DeepSeek has introduced its latest AI model family, DeepSeek-V4, quietly stepping up its presence in an increasingly competitive global market.

The update brings two versions, V4 Pro and V4 Flash, each designed for a slightly different purpose but both focused on improving how AI handles coding, reasoning, and task-driven workflows.

This is the company’s first major release since its R1 model earlier in 2025. With V4, DeepSeek is continuing to build on its open-weight approach, aiming to offer a credible alternative to the closed systems that currently dominate the space.

One of the more notable upgrades is the model’s ability to handle extremely large inputs. Both versions support a context window of up to one million tokens, which allows them to process and work through far more information in a single run than most existing models.
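To give a rough sense of what a one-million-token window means in practice, the sketch below estimates whether a body of text would fit in a single request. It assumes about 4 characters per token, a common rule of thumb for English text. DeepSeek's own tokenizer may count differently, so the numbers are estimates only. The `reserve_for_output` parameter is a hypothetical allowance for the model's reply and is not a documented DeepSeek setting.

```python
# Advertised maximum context for both V4 variants, per DeepSeek.
CONTEXT_WINDOW_TOKENS = 1_000_000

# Assumption: roughly 4 characters per token, a rule of thumb for English text.
# DeepSeek's tokenizer may differ, so treat every result as a rough estimate.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count of text, rounding up (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts: list[str], reserve_for_output: int = 8_000) -> bool:
    """Check whether all texts, plus a hypothetical reply budget, fit in one request."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


# Stand-in for about 3 MB of source code or documents.
codebase = ["x" * 3_000_000]
print(fits_in_context(codebase))  # True: ~750,000 tokens + 8,000 reserve
```

Under this heuristic, one million tokens corresponds to roughly 4 million characters of English text. Real token counts for code or non-English text can differ considerably.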

On performance, the company's early benchmark results suggest steady progress. On MMLU-Pro, V4 Pro scored 87.5, within reach of the leading models but not quite at the very top. Coding appears to be a stronger area: DeepSeek reports a score of 93.5 on LiveCodeBench and a Codeforces rating of 3206, pointing to solid competitive-programming ability.

For more demanding reasoning tasks, especially in mathematics, the model shows improvement but still trails the best closed systems in some cases. The difference, however, is becoming less pronounced.

The model seems to make a clearer impact in long-context performance. It handles large inputs effectively on long-context benchmarks such as MRCR and CorpusQA, and it performs on par with other leading systems in agent-style tasks, including software engineering and terminal-based workflows.

The two variants are positioned differently. V4 Pro, built with 1.6 trillion parameters, is the more powerful option. V4 Flash, at 284 billion parameters, is designed to be faster and more efficient, making it better suited for production use and API-based applications.

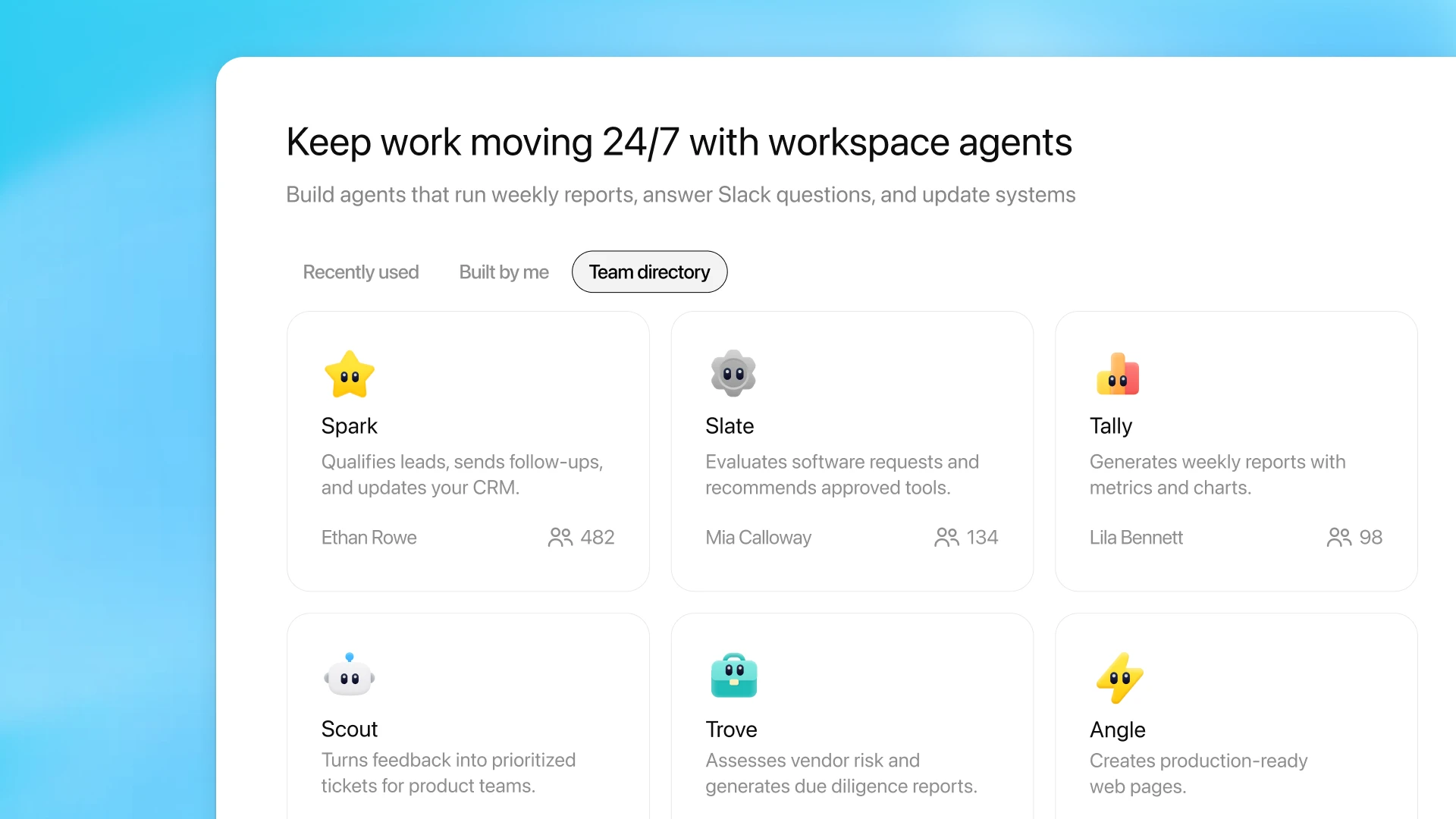

DeepSeek is also leaning into the growing role of AI agents. The company says the model is optimised to work alongside coding assistants and autonomous developer tools, and is already being used internally to support its own development workflows.

For developers, the setup is relatively straightforward. The API is live and compatible with widely used interfaces, so switching to the new models mainly involves updating the model name. Both versions also offer “thinking” and “non-thinking” modes, giving users more flexibility depending on the type of task.
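As an illustration of why switching is mostly a one-field change, here is a minimal sketch of a chat-completions request body. It assumes the V4 models are served through DeepSeek's existing `https://api.deepseek.com/chat/completions` endpoint using the familiar messages-based request shape. `deepseek-chat` is the name of DeepSeek's existing non-thinking model. The V4 identifier `deepseek-v4-flash` is a hypothetical placeholder, and the helpers `build_chat_request` and `switch_model` are illustrative names. The sketch also does not show how thinking and non-thinking modes are selected for V4, because the article does not specify this.

```python
import json

# DeepSeek's existing endpoint; assumed, not confirmed, to serve V4 as well.
API_URL = "https://api.deepseek.com/chat/completions"


def build_chat_request(model: str, prompt: str) -> dict:
    """Return a chat-completions request body for a single user message."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


def switch_model(request: dict, new_model: str) -> dict:
    """Return a copy of the request with only the model name changed."""
    updated = dict(request)  # shallow copy; messages are left untouched
    updated["model"] = new_model
    return updated


old_request = build_chat_request("deepseek-chat", "Summarise this diff.")
# Hypothetical identifier: check DeepSeek's documentation for real V4 model names.
new_request = switch_model(old_request, "deepseek-v4-flash")

print(json.dumps(new_request, indent=2))
```

The body would then be sent as JSON in a POST to `API_URL` with an API key in the `Authorization` header. Everything except the model name stays the same as in an existing integration.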

Taken together, DeepSeek-V4 doesn’t claim to outperform every competitor, but it shows how quickly things are evolving. The gap with top-tier models is narrowing, and the focus on efficiency and accessibility suggests the company is trying to make advanced AI more practical to use at scale.
