India’s AI infrastructure story is no longer just about adding more GPUs. It is increasingly about how efficiently those systems can run — especially in a country where heat and power costs are constant realities.

In a first-of-its-kind move, sovereign AI and cloud provider NxtGen will deploy NVIDIA H200 GPU servers equipped with “Diamond Cooling” technology. The rollout integrates patented thermal technology from San Francisco-based Akash Systems, with the aim of increasing compute density, improving energy efficiency, and lowering long-term infrastructure costs for enterprise AI workloads.

The diamond-based cooling solution is designed to complement traditional air and liquid cooling architectures rather than replace them. By conducting heat away from GPUs more effectively, it is intended to prevent thermal throttling, the automatic reduction in clock speed that GPUs apply to protect hardware when temperatures climb, and a common issue in high-temperature environments.

According to the companies, eliminating throttling enables sustained peak GPU output and improves effective compute performance by around 15% in warmer ambient conditions. That gain is particularly relevant for large-scale AI data centres in India, where high ambient temperatures can reduce FLOPs per watt and worsen overall power usage effectiveness (PUE).

AS Rajgopal, Managing Director and CEO of NxtGen, said the deployment strengthens India’s AI backbone by increasing usable compute per watt and per rack. He noted that higher structural efficiency allows the company to deliver stronger performance to customers while keeping long-term infrastructure costs under control.

Diamond has the highest thermal conductivity of any known bulk material at room temperature, up to about five times that of copper, which lets it carry heat away from a chip correspondingly faster. The technology was initially proven in satellite systems, where thermal management is critical. Adapted for AI servers, the companies say it enables sustained maximum performance at ambient temperatures of up to 50°C, compared with conventional operating norms of 23–29°C.
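
To give a rough sense of what a fivefold difference in conductivity means, the sketch below applies Fourier's law for steady-state conduction through a flat slab, ΔT = P·t / (k·A). The conductivity values are ballpark textbook figures, and the heat load and slab dimensions are hypothetical numbers chosen for illustration; none of them come from NxtGen or Akash Systems, and a real heat spreader involves interface resistances this ignores.

```python
# Approximate room-temperature thermal conductivities in W/(m*K).
# Ballpark textbook values, not figures published by the companies.
K_COPPER = 400.0
K_DIAMOND = 2000.0  # high-quality diamond; real films vary widely


def conduction_delta_t(power_w, thickness_m, area_m2, conductivity):
    """Steady-state temperature drop across a flat slab (Fourier's law)."""
    return power_w * thickness_m / (conductivity * area_m2)


# Hypothetical layer: 700 W flowing through a 1 mm slab over 8 cm^2.
power_w = 700.0
thickness_m = 1e-3
area_m2 = 8e-4

dt_copper = conduction_delta_t(power_w, thickness_m, area_m2, K_COPPER)
dt_diamond = conduction_delta_t(power_w, thickness_m, area_m2, K_DIAMOND)
ratio = dt_copper / dt_diamond

print(f"Copper slab temperature drop:  {dt_copper:.4f} K")
print(f"Diamond slab temperature drop: {dt_diamond:.4f} K")
print(f"Copper/diamond ratio:          {ratio:.1f}x")
```

Under these assumptions the temperature drop across the layer scales inversely with conductivity, so the same heat load produces about one fifth of the temperature rise in the diamond layer.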

The companies said the deployment has delivered measurable improvements, including reduced GPU hotspot temperatures, throttle-free operation in high-heat conditions, and roughly a 15% increase in FLOPs per watt. These efficiencies translate into higher compute productivity per server and improved thermal stability.

Felix Ejeckam, Co-founder and CEO of Akash Systems, described the deployment as addressing two pressing constraints in AI infrastructure: energy consumption and capital efficiency. He said even a 15% improvement in effective GPU compute can be transformative at scale.
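
A back-of-the-envelope sketch shows why a gain of that size matters at scale. It assumes, purely for illustration, that the 15% figure applies uniformly to every GPU and that workloads scale linearly with GPU count; the fleet size of 1,000 and the function name are hypothetical, not details from the announcement.

```python
def baseline_gpus_for_same_work(improved_gpus: int, gain_percent: int) -> int:
    """GPUs needed WITHOUT the gain to match `improved_gpus` WITH it.

    Computes ceil(improved_gpus * (100 + gain_percent) / 100) using
    integer arithmetic to avoid floating-point rounding surprises.
    """
    numerator = improved_gpus * (100 + gain_percent)
    return -(-numerator // 100)  # ceiling division


# Hypothetical fleet size; the 15% gain is the figure quoted by the companies.
improved_fleet = 1000
gain_percent = 15

baseline_fleet = baseline_gpus_for_same_work(improved_fleet, gain_percent)
extra_gpus = baseline_fleet - improved_fleet

print(f"GPUs with the gain:    {improved_fleet}")
print(f"GPUs without the gain: {baseline_fleet}")
print(f"Difference:            {extra_gpus}")
```

In this simplified model, a fleet running 15% more effectively does the work of a noticeably larger throttled fleet, which is where the capital and energy savings the companies describe would come from.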

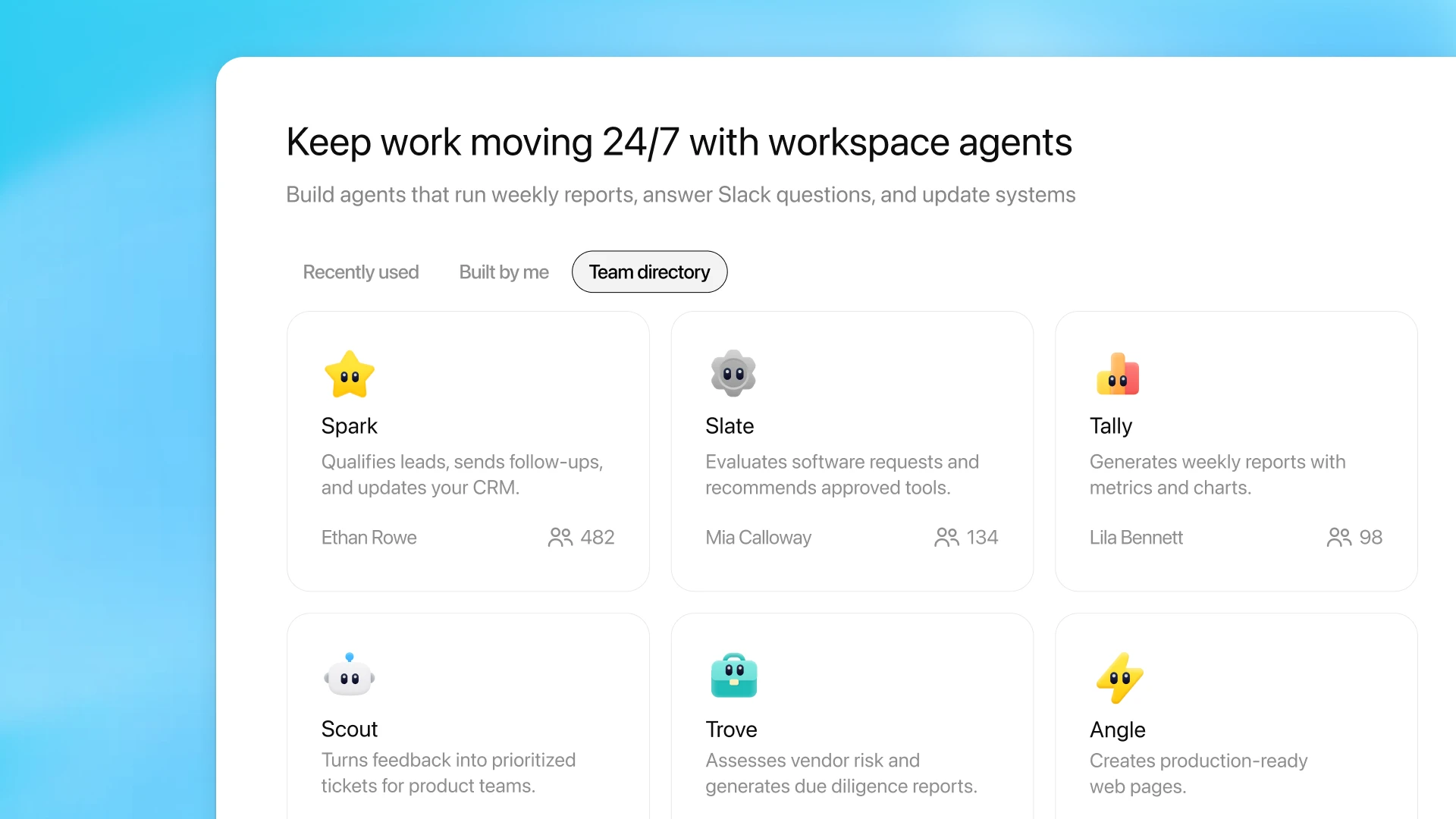

NxtGen’s broader AI platform integrates GPU architectures across NVIDIA, AMD, and Intel, offering enterprises optimised model hosting environments with sovereign data residency and compliance built in. With this deployment, the company is positioning itself to support high-performance AI workloads while addressing the growing demand for energy-efficient, cost-effective infrastructure.
